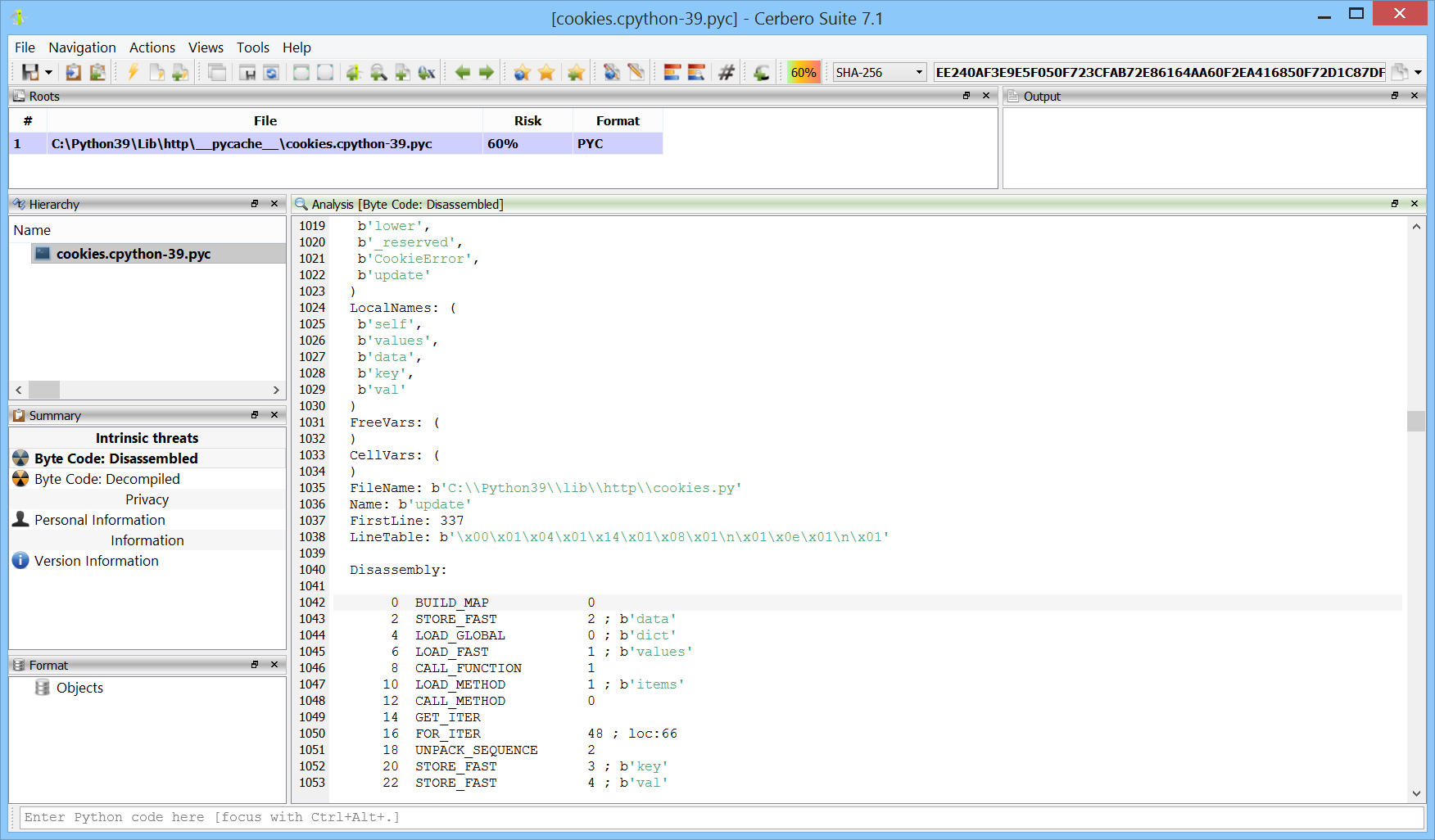

We have released the PYC Format package for all licenses of Cerbero Suite.

PYC files contain the compiled bytecode of Python source code. They can be deployed in place of the original source and are executed directly by the Python interpreter. Because the bytecode format changes between Python versions, a PYC file is tied to the version that compiled it and must be recompiled when a different Python version is used.
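To illustrate the version dependency, here is a minimal sketch in plain CPython (it does not use the Cerbero Suite SDK). It relies on the fact that a PYC file starts with a 4-byte magic number identifying the bytecode version, which the standard library exposes for the running interpreter as `importlib.util.MAGIC_NUMBER`. The helper names `read_pyc_magic`, `is_compatible_with_running_python` and `compile_sample` are hypothetical and chosen only for this example.

```python
import importlib.util
import os
import py_compile
import tempfile


def read_pyc_magic(path):
    """Return the 4-byte magic number at the start of a PYC file."""
    with open(path, "rb") as f:
        return f.read(4)


def is_compatible_with_running_python(path):
    """Check whether the PYC magic matches the running interpreter's magic."""
    return read_pyc_magic(path) == importlib.util.MAGIC_NUMBER


def compile_sample():
    """Compile a tiny throwaway module and return the path to its PYC file."""
    tmp_dir = tempfile.mkdtemp()
    src = os.path.join(tmp_dir, "sample.py")
    with open(src, "w") as f:
        f.write("x = 1\n")
    # doraise=True turns compilation errors into exceptions instead of stderr output.
    return py_compile.compile(
        src, cfile=os.path.join(tmp_dir, "sample.pyc"), doraise=True
    )


if __name__ == "__main__":
    pyc_path = compile_sample()
    print("magic:", read_pyc_magic(pyc_path).hex())
    print("matches running interpreter:", is_compatible_with_running_python(pyc_path))
```

A file compiled by the running interpreter passes the check, while one produced by an interpreter with a different bytecode version carries a different magic number and would be rejected on import.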